Automate Conversations in Context

While Trust Studio defines the conversational rules (Build), Trust Engine carries them out in production (Run).

Using its APIs and connectors, Trust Engine gathers the contextual data it needs and compiles the instruction for the appropriate next response or action.

Runtime Intelligence

Context-Aware Execution

Trust Engine integrates with multiple data sources to ensure every response is accurate, compliant, and contextually relevant.

Multi-Source Data Integration

Gathers customer data from a CRM, intent and sentiment data from an LLM, and/or response data from the customer

Real-Time Processing

Compiles instructions and executes responses instantly across all channels

Rule-Based Execution

Applies Trust Studio rules to ensure every action stays within defined guardrails
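
The flow described above (gather context from several sources, then apply rules to compile the next instruction) can be pictured with a minimal sketch. Everything in it is an assumption made for illustration: `Context`, `Rule`, `gather_context`, `compile_instruction`, and the instruction strings are hypothetical names, not part of the Trust Engine or Trust Studio API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical, illustrative types; none of these names come from Trust Engine.


@dataclass
class Context:
    customer: Dict[str, str]  # e.g. a record pulled from a CRM
    intent: str               # e.g. classified by an LLM
    sentiment: str            # e.g. classified by an LLM
    last_message: str         # the customer's latest response


@dataclass
class Rule:
    name: str
    applies: Callable[[Context], bool]  # predicate over the gathered context
    instruction: str                    # instruction to emit when the rule matches


def gather_context(crm_record: Dict[str, str],
                   llm_analysis: Dict[str, str],
                   customer_message: str) -> Context:
    """Merge data from the three sources into a single context object."""
    return Context(
        customer=crm_record,
        intent=llm_analysis["intent"],
        sentiment=llm_analysis["sentiment"],
        last_message=customer_message,
    )


def compile_instruction(ctx: Context, rules: List[Rule],
                        fallback: str = "escalate_to_agent") -> str:
    """Return the instruction of the first matching rule, else the fallback."""
    for rule in rules:
        if rule.applies(ctx):
            return rule.instruction
    return fallback


# Rules are checked in order, so earlier rules take priority.
rules = [
    Rule("negative_sentiment", lambda c: c.sentiment == "negative", "escalate_to_agent"),
    Rule("billing_question", lambda c: c.intent == "billing", "send_billing_summary"),
]

ctx = gather_context(
    {"tier": "gold"},
    {"intent": "billing", "sentiment": "neutral"},
    "Why was I charged twice?",
)
instruction = compile_instruction(ctx, rules)
```

In this sketch, the ordered rule list plays the role of the guardrails: if no rule matches, the fallback instruction is returned rather than an unconstrained response.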

Unified LLM Management

Enterprise-Grade AI Governance

Trust Engine brings unified LLM management, built-in safety, and enterprise governance to unlock AI value while mitigating risks.

With unified LLM management, you can select the LLM that best balances cost and performance while maintaining service continuity.

LLM Flexibility

Select your LLM to optimize cost and performance for different use cases

Service Continuity

Maintain uninterrupted service with automatic failover and load balancing across providers

Built-In Safety

Enterprise governance ensures AI outputs always comply with policies and regulations
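
The service continuity idea above, automatic failover across providers, can be illustrated with a short sketch. It is only a sketch under stated assumptions: `ProviderError`, `complete_with_failover`, and the two stand-in provider functions are hypothetical and do not reflect how Trust Engine implements failover. Load balancing is not covered.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical names for illustration only; not Trust Engine's API.


class ProviderError(Exception):
    """Raised when an LLM provider call fails (timeout, outage, and so on)."""


# A provider is a (name, callable) pair; the callable maps a prompt to a reply.
Provider = Tuple[str, Callable[[str], str]]


def complete_with_failover(prompt: str,
                           providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in priority order; return (provider_name, reply).

    Raises ProviderError if every provider fails.
    """
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Record the failure and fall through to the next provider.
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


def flaky_primary(prompt: str) -> str:
    # Stand-in for a provider that is currently unavailable.
    raise ProviderError("timeout")


def stable_backup(prompt: str) -> str:
    # Stand-in for a healthy provider.
    return f"echo: {prompt}"


used, reply = complete_with_failover(
    "hello",
    [("primary", flaky_primary), ("backup", stable_backup)],
)
```

Here the primary provider fails, so the call is answered by the backup; ordering the provider list by cost or quality is one simple way to express a preference among models.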

Ready to Power Your Conversational AI?

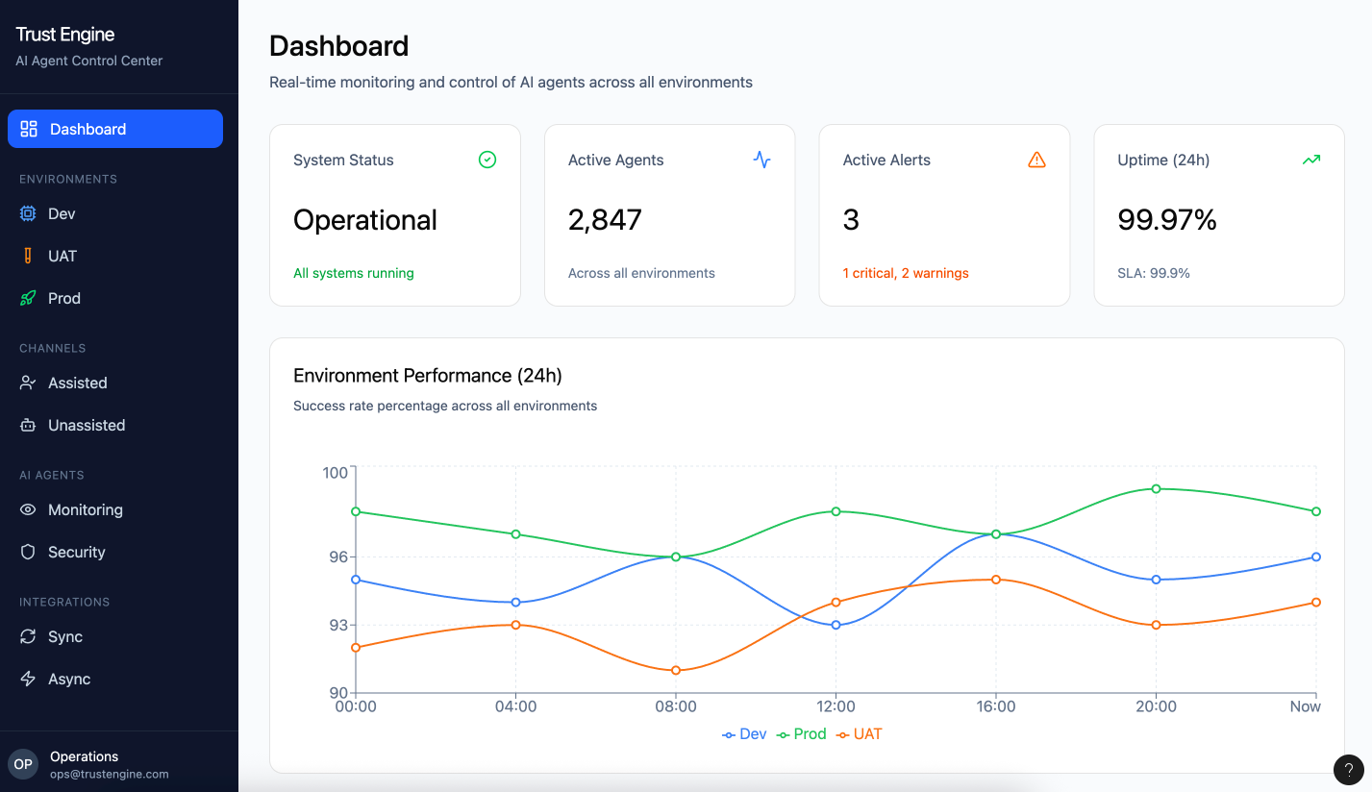

See how Trust Engine executes intelligent conversations at scale with enterprise-grade reliability