Trust is proving to be the critical issue

In regulated industries, trust is not a feeling.

It's an operational property that must be engineered into every unassisted conversation.

Accuracy and expertise in every response.

Every decision traceable and provable.

Consistent across every channel and customer.

Always aligned with rules, policies, and obligations.

Fair, transparent, compliant.

Most AI-first platforms simply aren't engineered for trust.

Why Most AI Platforms Fail Where Trust Matters

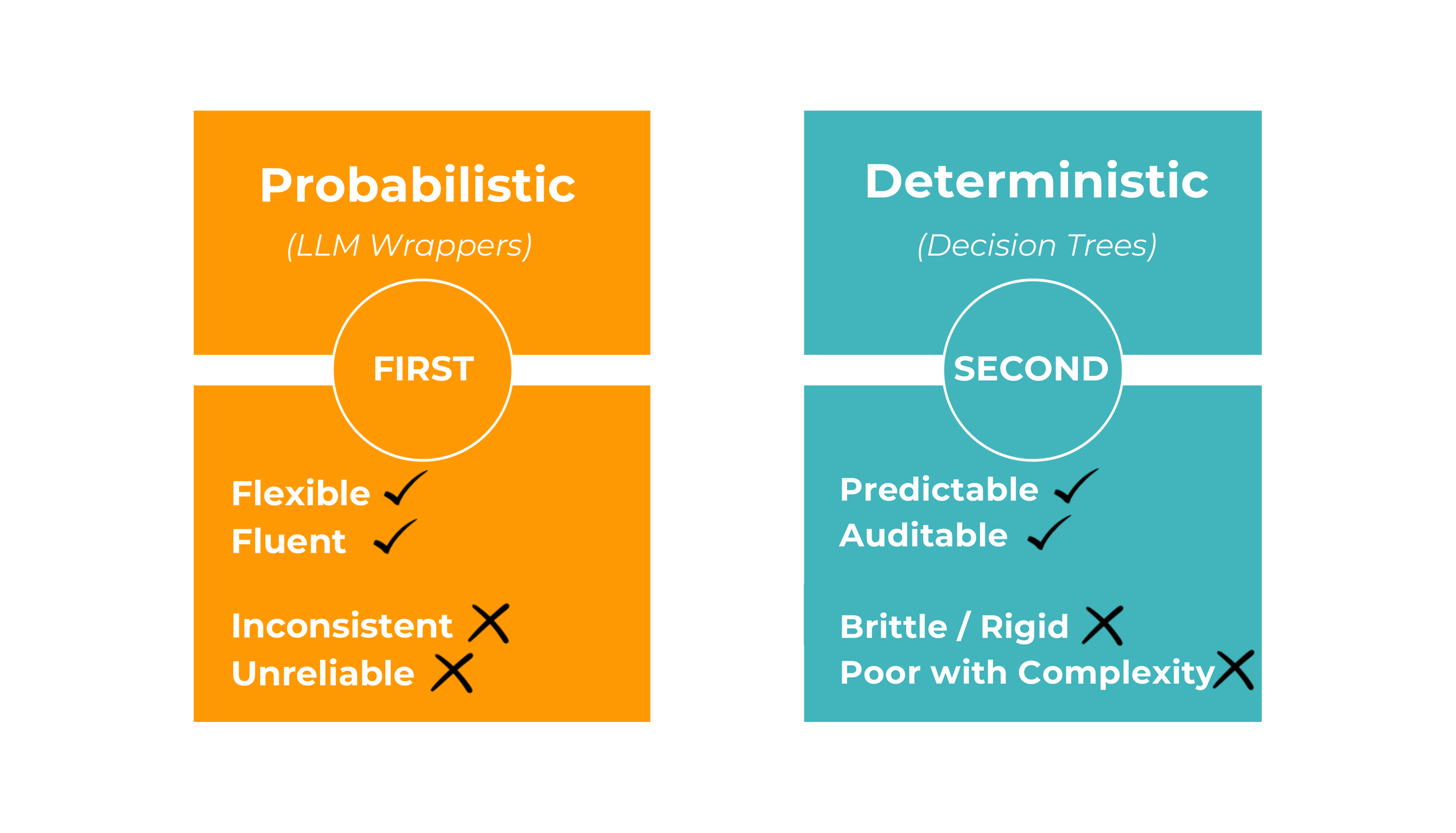

AI-first platforms may work in unstructured environments, but in regulated sectors where compliance, accuracy, and consistency are non-negotiable, they inevitably fail.

Probabilistic AI

Too unpredictable for trusted decisions

Decision Trees

Collapse under real-world complexity

Compliance Risk

Small errors = massive financial & reputational fallout

Customer Zig-Zags

People change topics, tone, and context mid-conversation

Probabilistic-first platforms may sound fluent, but in regulated sectors fluency without certainty is not enough.

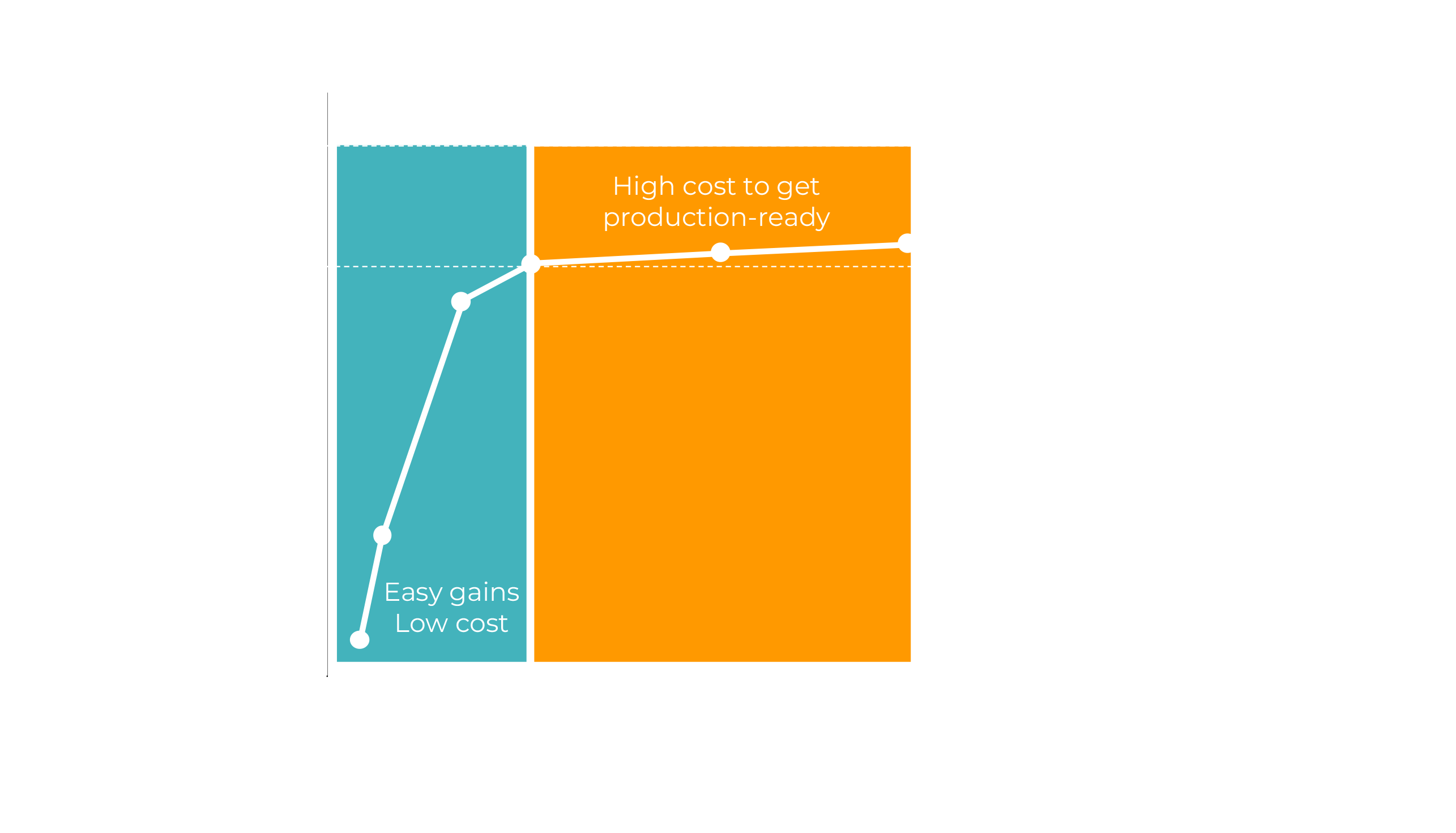

The High Cost of Getting It Right

In regulated industries, "almost right" is still wrong.

Most AI platforms hit an invisible barrier: the Pareto Frontier. Easy wins get automated, but pushing for near-perfect accuracy quickly becomes exponentially expensive.

This is why so many projects stall in pilot purgatory. The challenge isn't building a chatbot. It's engineering unassisted automation that can cross the last mile safely, at scale.

The real cost of failure isn't just wasted spend. It's lost customer trust, regulatory risk, and ongoing dependence on live agents.

Ready to see the platform in action?

See how Trust Orchestrator can help you achieve unassisted automation at scale.